the Blog

Once upon a BLAST

By Guillaume Filion, filed under BLAST, series: focus on, sequence alignment, bioinformatics.

• 30 June 2014 •

The story of this post begins a few weeks ago when I received a surprising email. I have never read a scientific article giving a credible account of a research process. Only the successful hypotheses and the successful experiments are mentioned in the text — a small minority — and the painful intellectual labor behind discoveries is omitted altogether. Time is precious, and who wants to read endless failure stories? Point well taken. But this unspoken academic pact has sealed what I call the curse of research. In simple words, the curse is that by putting all the emphasis on the results, researchers become blind to the research process because they never discuss it. How to carry out good research? How to discover things? These are the questions that nobody raises (well, almost nobody).

Where did I leave off? Oh, yes... in my mailbox lies an email from David Lipman. For those who don’t know him, David Lipman is the director of the NCBI (the bio-informatics spearhead of the NIH), of which PubMed and GenBank are the most famous children. Incidentally, David is also the creator of BLAST. After a brief exchange on the topic of my previous...

June babies and bioinformatics

By Guillaume Filion, filed under extreme value theory, Gumbel, bioinformatics, extreme value fallacy.

• 06 April 2014 •

In July 1982, paleontologist Stephen Jay Gould was diagnosed with cancer. Facing a median prognosis of only 8 months of survival, he used his knowledge of statistics to prepare for the future. As he explains in The Median Isn’t the Message, if half of the patients died of this rare case of mesothelioma within 8 months, those who did not had much better survival. Evaluating his own chances of being in the “survivor” group as high, he planned for long-term survival and opted out of the standard treatment. He died 20 years later, from an unrelated disease.

If not the median, then what is the message? Statistics put a disproportionate emphasis on the typical or average behavior, when what matters is sometimes in the extremes. This general blindness to the extremes is responsible for a dreadful lot of confusion in the bio-medical field. One of my all-time favorite traps is the extreme value fallacy. Nothing explains it better than an example.

June babies and anorexia

If the perfect mistake was ever made, it definitely was in an article about anorexia published in 2001. A more accessible version of the story was...

How to write a cover letter

By Guillaume Filion, filed under PhD, post-doc.

• 15 March 2014 •

In the first call for post-docs in my lab, I realized that most applicants did not have a clear idea of what to write in a cover letter. My technicians and I read them all with great frustration. Retrospectively, this is not so surprising, given that nobody teaches you how to write a cover letter in academia. Since it may be useful for the community, I am going to explain how I read a cover letter and what I would like to see there.

What is a cover letter for?

From the principal investigator’s perspective, the purpose of a cover letter is to get some insight into your motivations.

Several candidates think that the cover letter is the place to argue why you should hire them. Others use it as a chance to translate their CV into English prose. None of this will bring you very far. All you need to do is give enough information about yourself to let the employer know whether you have the profile they are looking for. At the same time, you want to demonstrate a proactive attitude.

Before you write, ask yourself what drives you, why you started this career. Is that ambition...

Four illusions

By Guillaume Filion, filed under motivation, illusions, leadership, management.

• 28 February 2014 •

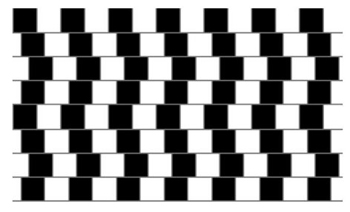

Optical illusions come in two flavors. The one shown on the left is a classical example of a “false” perception. It looks like the grey lines are curved, but they are actually perfectly horizontal. Even if we know that the lines are horizontal, we cannot force ourselves to see them this way. I find it somewhat of a miracle that reason is strong enough to tell that the eyes are lying, but still, however long you stare at the picture, you will never see it the right way. The brain cannot learn to see this picture.

The second flavor of optical illusions is shown on the right. This picture may either represent the face of a woman, or a saxophone player. Most people immediately see one of those, and it may take them some time to see the other. But once they see it, there is no way to “unsee” it. The brain has completely forgotten how it feels to not see it and cannot unlearn to see the picture.

Vision is not the only sense that can be fooled. As a matter of fact, what is so special about optical illusions is that we realize that something...

So you want to be a group leader

By Guillaume Filion, filed under motivation, leadership, management.

• 21 January 2014 •

People often ask me whether it is difficult to be a group leader. I usually answer “No, that’s easy. Being a good group leader... that’s another story.” It is one of those jobs for which there is no training. It is also one of those jobs about which there is no information. As the leader of a team of scientists, you will have to face your problems alone, because scientific leadership is...

The best kept secret in the world

The need for solid leadership skills is fully acknowledged in the civilized world, but in academic research this culture is lagging behind. So far behind that we have to admit the plain truth: leadership in science is a taboo. Understandably, academic researchers are reluctant to share their experience because they have little authority to do so, and because the information is often confidential. I could tell how I solved “the case of a post-doc of mine who put his music too loud in the lab”, but since all my colleagues would identify this person, I would end up with a serious issue in my team.

There are also systemic reasons for this lack of culture. Academic...

Infinite expectations

By Guillaume Filion, filed under diffusion, probability, black swans, brownian motion.

• 06 January 2014 •

In the first days of my PhD, I sincerely believed that there was a chance I would find a cure for cancer. As this possibility became more and more remote, and as it became obvious that my work would not mark a paradigm shift, I became envious of those few people who did change the face of science during their PhD. One of them is Andrey Kolmogorov, whose PhD work was nothing less than the modern theory of probability. His most famous result was the strong law of large numbers, which essentially says that random fluctuations become infinitesimal on average. Simply put, if you flip a fair coin a large number of times, the frequency with which ‘tails’ turns up will be very close to the expected value 1/2.
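The coin-flip statement is easy to check numerically. Below is a minimal sketch, not taken from the original post: the function name `tails_frequency` and the fixed seed are illustrative assumptions, chosen only to make the run reproducible.

```python
import random

def tails_frequency(n_flips, seed=0):
    """Flip a simulated fair coin n_flips times and return the frequency of tails."""
    rng = random.Random(seed)  # fixed seed (an assumption) so the run is reproducible
    tails = sum(rng.random() < 0.5 for _ in range(n_flips))
    return tails / n_flips

# The frequency should drift toward 1/2 as the number of flips grows.
for n in (10, 1_000, 100_000):
    print(n, tails_frequency(n))
```

With a hundred thousand flips the frequency typically sits within a fraction of a percent of 1/2, whereas ten flips can easily be off by 0.2 or more.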

The chaos of large numbers

What is most fascinating about the strong law of large numbers is that it is a theorem, which means that it comes with hypotheses that do not always hold. There are cases where repeating a random experiment a very large number of times does not guarantee that you will get closer to the expected value; I wrote up the gory details on Cross Validated, for those interested...
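One textbook case where the hypotheses fail is the Cauchy distribution, which has no expected value at all. It is used here purely as an illustration and is not necessarily the example discussed in the post. The sketch below (helper names are hypothetical) measures how spread out the sample mean is across repeated experiments; if the law of large numbers applied, that spread would shrink as the sample size grows.

```python
import math
import random

def cauchy_sample_mean(n, rng):
    """Mean of n standard Cauchy draws, generated as tan(pi * (U - 1/2))."""
    return sum(math.tan(math.pi * (rng.random() - 0.5)) for _ in range(n)) / n

def interquartile_range(values):
    """Rough interquartile range: distance between the 3/4 and 1/4 order statistics."""
    ordered = sorted(values)
    q = len(ordered) // 4
    return ordered[3 * q] - ordered[q]

def spread_of_means(n, trials=200, seed=1):
    """Spread of the sample mean over repeated experiments of size n."""
    rng = random.Random(seed)  # fixed seed (an assumption) for reproducibility
    return interquartile_range([cauchy_sample_mean(n, rng) for _ in range(trials)])

# For a distribution with finite variance, the second number would be about
# ten times smaller than the first. For Cauchy draws, both stay of the same order.
for n in (10, 1_000):
    print(n, round(spread_of_means(n), 2))
```

The reason is that the average of n independent standard Cauchy variables is itself standard Cauchy, so averaging more draws does not reduce the fluctuations.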

Who understands the histone code?

By Guillaume Filion, filed under epigenetics, histone code, position effect variegation.

• 30 November 2013 •

The most annoying thing about us biologists is that we keep using words that we don’t understand. “Epigenetics” is one of those that has drawn my attention for several years, as I explained in my last post. I suggested that the invasion started in 2001, the year that the histone code hypothesis was proposed by Thomas Jenuwein and David Allis in a seminal paper entitled Translating the Histone Code.

The histone code hypothesis was arguably the most influential concept of the last decade in molecular biology. Yet, most biologists would be hard pressed to say what the hypothesis is. All you have to do is read what Thomas Jenuwein and David Allis actually wrote. But believe it or not, this blog is one of the few places on the Internet where the histone code hypothesis is spelled out clearly. Most sources, including the Wikipedia article, diverge substantially from the original statement, which is the following.

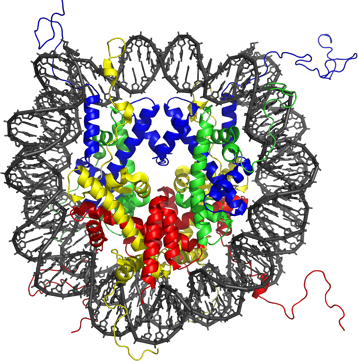

Distinct qualities of higher order chromatin, such as euchromatic or heterochromatic domains, are largely dependent on the local concentration and combination of differentially modified nucleosomes.

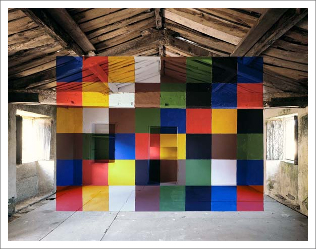

DNA in the nucleus comes in a structure called the nucleosome. The picture above is a molecular...

The rise of epigenetics

By Guillaume Filion, filed under PubMed, histone code, chromatin, epigenetics.

• 13 October 2013 •

I started to study biology at the time epigenetics became a buzzword. I first heard the term at university in 2001, and like many young enthusiastic people of the time, I did my PhD on epigenetics because it was cool. But buzzes come and go; I finished my PhD and I got bored with epigenetics. Meanwhile, I thought that my interest had been mirroring that of the community, and that the trend was towards a loss of interest in epigenetics. I was about to write a blog post entitled “The death of epigenetics” when I did a quick PubMed search and realized that the peak of popularity was... 2013. Epigenetics is not dead, it is on the rise!

Above is the number* of PubMed hits for “epigenetics” per month since 1996, with “chromatin” shown as a reference for comparison. PubMed now displays a histogram of the occurrence of your search term over the years (check here for epigenetics). The growth is not due to articles published in late-adopting journals, since the trend-setters Cell, Nature and Science published more than half of their papers labelled “epigenetics” in the last three years.

What is epigenetics anyway?

One of...

Meet planktonrules

By Guillaume Filion, filed under interview, planktonrules, series: IMDB reviews.

• 15 September 2013 •

Some of you may remember planktonrules from my series on IMDB reviews. For those of you who missed it, planktonrules is an outlier. In my attempt to understand what IMDB reviewers call a good movie, I realized that one reviewer in particular had written a lot of reviews. When I say a lot, I mean 14,800 in the last 8 years. With such a boon, I could not resist the temptation to use his reviews to analyze the variation of style between users, and to build a classifier that recognizes his reviews.

I finally got in contact with Martin Hafer (planktonrules’ real name) this year, and since he had planned to visit Barcelona, we set up a meeting in June. I have to admit that I expected him to be a sort of weirdo, or a cloistered sociopath. The reality turned out to be much more pleasant; we had an entertaining chat, speaking very little about movie reviews. He also pointed out to me that doing statistics on what people write on the Internet is a bit weird... True that.

Anyway, as an introduction, here is a mini interview of planktonrules. You can find out more...

How to stop sucking and be awesome instead

By Guillaume Filion, filed under motivation, PhD.

• 08 June 2013 •

I recently gave a motivation speech at the CRG/Institut Curie international PhD retreat. There was only one slide and the content was fairly general, so I thought I could reproduce it here. My goal was to motivate people, but also to surprise them a little, especially at the end. Finally, I wish such a nice title were mine, but I have to acknowledge Jeff Atwood. I stole it from his post on Coding Horror (which I also invite you to read).

How to stop sucking and be awesome instead

Think about what we can do today. We can send people to the moon. We can talk to each other any time, anywhere on the planet. We can go anywhere in about a day. We can transplant a heart. We can cure diseases that were fatal only 30 years ago. And yet, there is still one thing that we cannot do. We don’t know how to motivate people.

That’s right, we do not know how to make our colleagues enthusiastic about their work. If you watch a couple of TED videos or if you read a couple of books on management, you will see that we...