Unpredictable?

By Guillaume Filion, filed under

information,

randomness,

random generator,

probability.

•

•

What if I told you to choose a card at random? Simply choose one from a standard deck of 52 cards, think of a card, do not draw one from a real deck... Make sure you have one in mind before you read on.

The illusion

Assuming that you have a card in mind, I bet you chose the Ace of Spades. Of course, I don't know the card you were thinking of, but that is the one I bet on. Every textbook on probability says that I have a 1/52 chance of having guessed right. Actually, that is not quite true... About one out of 4 readers will choose the Ace of Spades, and one out of seven will choose the Queen of Hearts, as shown by a study of the researcher in psychology of magic Jay Olson and his collaborators.

In this experiment, is the choice of a card purely random? And what does random mean anyway? Even if purely random is not properly defined, most would agree that it means no information at all, or completely unpredictable. The outcome of the experiment above is clearly not what you would call purely random. But statisticians do not use the term purely random. The statistical jargon for it is uniform. The outcome of the above experiment is actually random, but not uniform, which means that all the cards do not have the same probability of being picked. The uniform distribution carries the smallest amount of Shannon information, which means that every other random distribution will represent a relative gain of information (click on the Penrose triangle for a detailed computation of the information gain in the above experiment).

The point of this little game was to illustrate the major difficulty with uniform randomness: we think it is easy to be unpredictable. But this is hard. This is very hard. Mentalists make this point in their performances, which makes them so entertaining. And in case you are still convinced of your capacity to be unpredictable, I invite you to play rock-paper-scissors against a computer.

Random generators

OK, the human brain is bad at producing uniform randomness. So what? Why do we need randomness? Just for the thrill of gambling?

The thrill of a good poker game is sure very nice, but randomness is also a huge industrial stake. Producing unpredictable numbers has become very important for cryptography and information security in general. The basic requirement of a password is to be hard to guess, right? Well, it is the same for information; it is protected if it is hard to guess, and uniform randomness is hardest to guess. So information is secure when it looks random to everyone else, except you and the people you want to share it with.

To give an example, the Diffie-Hellman key exchange protocol is an elegant cryptographic algorithm that allows two people (or more likely computers) to create a common secret number that is unknown from everyone else, even if the exchange of information is intercepted. The idea is that each person chooses a secret number, encrypts it and sends it to the other. The knowledge of your own secret number and the encrypted number of your partner is sufficient to coin a common secret number, whereas the knowledge of both encrypted numbers (i.e. if messages are intercepted) is insufficient. The common secret number can then be used to encrypt new messages, and is known as a cryptographic key. The whole process relies on one important detail: that the pair of secret numbers chosen initially is unpredictable, otherwise it is fairly easy to hack such a protocol.

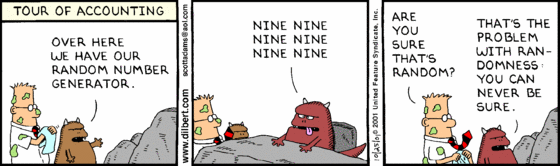

And now comes the catch: random generators are not random. Today's computers are fully deterministic and for that reason unable to create true randomness. Instead, most machines use pseudo-random generators. A pseudo-random generator is a fully deterministic algorithm to produce numbers that look randomly uniform. Yet, the history of pseudo-random number generators has its dark spots. Most infamous among the bad random generators is RANDU, which was the pseudo-random generator of IBM mainframes in the 1960's. It turned out that RANDU showed a perfectly regular pattern in the triplets of numbers it produced, which initially escaped its designers... Quite predictable for a random generator.

The deterministic nature of pseudo random generators may appear as a limitation, but it can turn out to be useful. For instance, pseudo random generators are used in reproducible research to document simulations and stochastic algorithms in ways that can be reproduced by the community. In most research projects, reproducibility matters more than absolute randomness so pseudo-random generators will stick around for quite some time (as of today, the Mersenne twister is state of the art).

But pseudo-randomness is not the only option available. You can also buy your random numbers. Some companies claim to use quantum physics hardware (for example IDQ and Comscire). Or you can get them for free from the server random.org, which makes them from atmospheric variables.

That all sounds good, but how do we know numbers are random? Well, that's the problem with randomness: you can never be sure. There are only empirical tests of random number generators such as the die hard and die harder batteries of tests (in case you wondered, the pun is not accidental), or the more difficult to pass TestU01. Those tests are a collection of sanity checks for all sorts of undesirable properties, like bias, autocorrelation, clustering etc, but no pseudo random generator passes all the tests of the TestU01. If humans find it hard to be unpredictable, it is even harder for machines, so take it easy if you lost at rock-paper-scissors.